CNBC, Axios, Bloomberg and the Wall Street Journal contributed to this report.

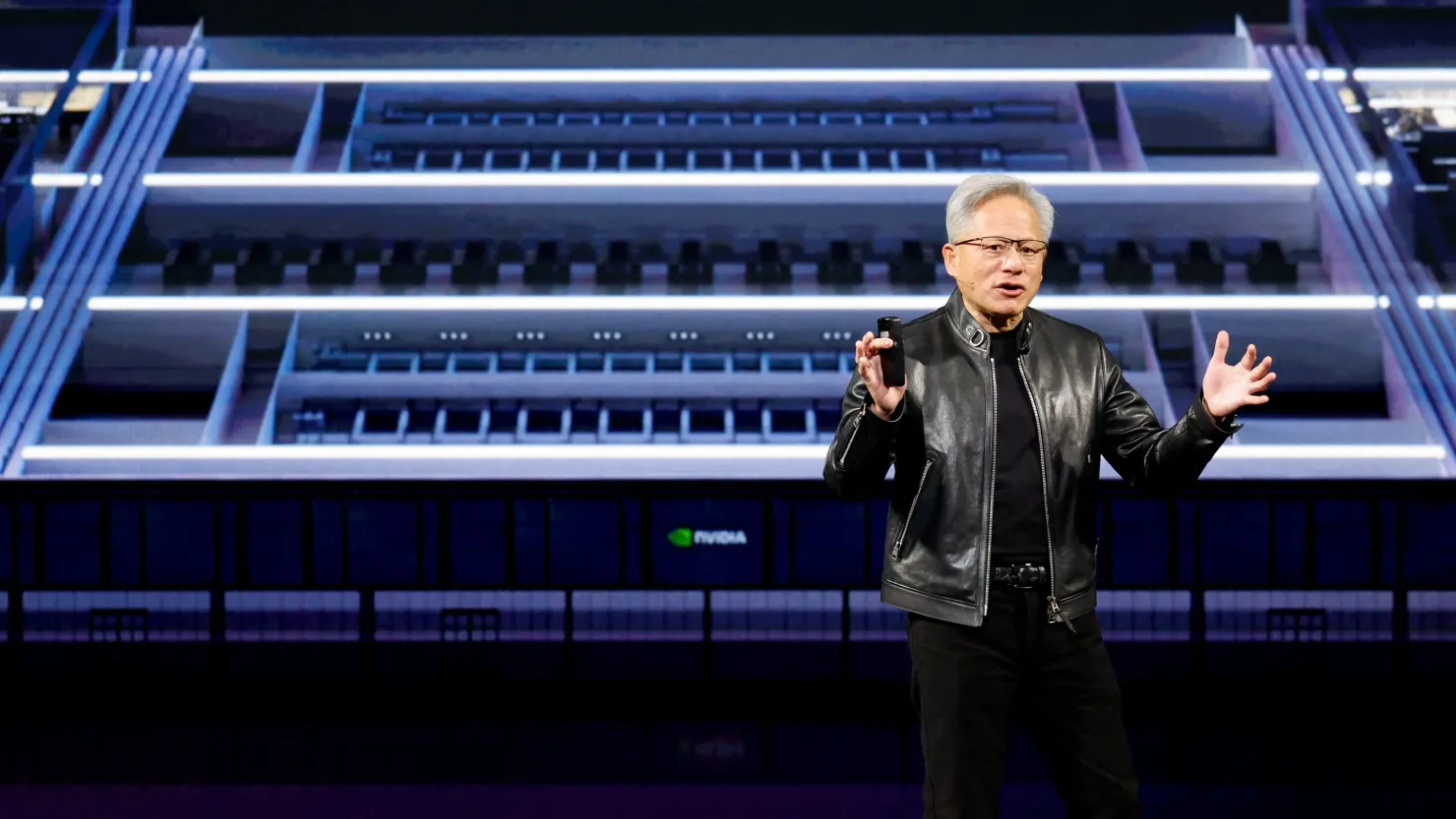

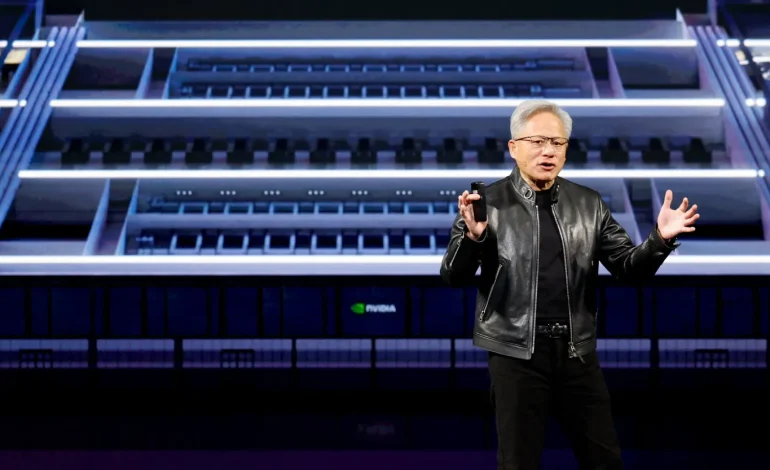

Nvidia’s latest chips are getting faster and more efficient so quickly that the usual limits on hardware (you know, physics and thermodynamics) seem to be struggling to keep up. CEO Jensen Huang told investors he expects the company to pull in “at least” $1 trillion from its newest chips through 2027, and recent quarterly numbers (record sales and eye-popping data-center orders) suggest the market is buying that pitch.

Here’s the weird math: these are tiny silicon tiles, but each generation packs dramatic gains in performance-per-watt. That matters because data centers don’t just need compute — they need power and cooling. If chip efficiency stalls, so does the data-center boom. If it keeps improving like this, whole new AI workloads stay affordable and scalable.

Industry trackers show Nvidia’s dominant footprint has loosened a bit as rivals scale up. Research from SemiAnalysis (via data compiled by Epoch.ai) notes Nvidia’s cumulative share of installed AI compute slipped from essentially 100% in early 2022 to about 65% by Q4 2025 — with others like Google, AMD, Amazon and Huawei all carving out slices of the pie. Competition is heating up, but the performance march is still led by the original juggernaut.

Why does efficiency matter so much? Because electricity becomes heat, and heat needs to be removed. More chips running hotter means more power, bigger cooling plants and higher bills, and those costs climb fast. That’s why Nvidia’s architecture shifts (think Blackwell and company) are being watched like rocket launches: small tweaks in chip design translate into big savings at the rack and data-center level.
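To make the power-and-cooling link concrete, here is a rough back-of-the-envelope sketch. It leans on power usage effectiveness (PUE), a common industry ratio of total facility power to IT power that this article does not itself cite, and every number in it is hypothetical: the chip count, the per-chip wattage and the PUE value are assumptions chosen only to show how a per-chip saving scales up.

```python
def facility_power_kw(chips: int, watts_per_chip: float, pue: float) -> float:
    """Estimate total facility power in kW.

    Nearly all electricity drawn by the chips ends up as heat, so the
    facility needs extra power for cooling and overhead. PUE (total
    facility power divided by IT power) captures that overhead.
    """
    it_power_kw = chips * watts_per_chip / 1000.0
    return it_power_kw * pue


# Hypothetical inputs, for illustration only.
CHIPS = 10_000
PUE = 1.3  # assumed overhead: 30% extra power for cooling and the rest

older = facility_power_kw(CHIPS, watts_per_chip=1000.0, pue=PUE)
newer = facility_power_kw(CHIPS, watts_per_chip=800.0, pue=PUE)

print(f"older design: {older:,.0f} kW")
print(f"newer design: {newer:,.0f} kW")
print(f"saved:        {older - newer:,.0f} kW")
```

Under these assumed inputs, a 200-watt saving per chip is multiplied first by the number of chips and then again by the cooling overhead, which is why small design changes show up as large numbers on the facility bill.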

Josh Parker, who runs sustainability for Nvidia, puts it bluntly: efficiency isn’t a side benefit, it’s the ceiling. Better chips let customers do more without blowing past power and cooling limits. But as Parker (and others) point out, better efficiency frequently drives higher overall usage, the old Jevons paradox, so total AI energy demand has kept climbing even as chips get leaner.

Another wrinkle: most of the big wins so far have been on training — building giant models — where Nvidia’s hardware shines. The next frontier is inference — running models cheaply at massive scale — and that’s a different efficiency problem. As investor and commentator Paul Kedrosky warned, inference is “incredibly threatening” to any vendor that can’t pivot to ultra-low-cost serving. In short: training dominance doesn’t automatically buy you inference dominance.

The industry is scrambling on the cooling front. Traditional air-cooled facilities still work, but liquid cooling and more exotic systems are becoming necessary to wring performance out of dense racks. Motivair’s liquid-cooling lead, Rich Whitmore, says chips and servers are useless without power and cooling — and that the infrastructure piece is as important as the chip itself.

Inside Nvidia’s shop, engineers like Dion Harris point to architectural redesigns in each new family of processors that squeeze more compute into the same power budget. Those gains are what let hyperscalers keep ordering more kit without instantly tripling cooling costs.

If chip efficiency were a dial that only saved energy, life would be simple. Instead, efficiency is a growth accelerator. It lowers the cost-per-inference or cost-per-training-step, and companies then run more models, try bigger applications, and push AI into places it couldn’t economically live before. That feedback loop is why the energy footprint of AI keeps growing even as chips get smarter.
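That feedback loop can be put in rough numbers. The sketch below uses made-up figures, not data from Nvidia or any source cited here: it assumes each chip generation halves the energy per inference while cheaper serving triples usage, and it shows total energy rising anyway. The function name `total_energy_kwh` and both growth factors are hypothetical.

```python
def total_energy_kwh(energy_per_inference_wh: float, inferences: float) -> float:
    """Total energy in kWh for a given per-inference cost and volume."""
    return energy_per_inference_wh * inferences / 1000.0


# Hypothetical starting point: 1 Wh per inference, 1 billion inferences.
energy_wh = 1.0
volume = 1e9

# Assumed per-generation changes (illustrative only).
EFFICIENCY_GAIN = 2.0  # each generation halves energy per inference
DEMAND_GROWTH = 3.0    # cheaper inference triples usage

history = []
for generation in range(4):
    history.append(total_energy_kwh(energy_wh, volume))
    energy_wh /= EFFICIENCY_GAIN
    volume *= DEMAND_GROWTH

for generation, kwh in enumerate(history):
    print(f"generation {generation}: {kwh:,.0f} kWh")
```

Whenever the assumed demand growth outpaces the assumed efficiency gain, the total climbs each generation. If the two factors were equal, total energy would stay flat, and only when efficiency gains outrun demand would it fall.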

Nvidia’s race isn’t just about squeezing more FLOPS into a square millimeter. It’s a battle over how far society can bend the laws of physics with clever engineering before power and cooling become the choke points. For now, the company — and its rivals — are still finding ways to outrun the limits. Whether that keeps working is the billion-dollar question for data centers, cloud customers and anyone watching the AI boom.
