Court Losses Pile Up for Meta as Child Safety Rulings Shake Big Tech

CNBC, the New York Times, and BBC contributed to this report.

It’s been a rough stretch for Meta – in courtrooms, on Wall Street, and in the broader fight for public trust.

The company took two major legal hits this week, both tied to how its platforms handle child safety. Together, the rulings are starting to look like something bigger than a bad week. More like a turning point.

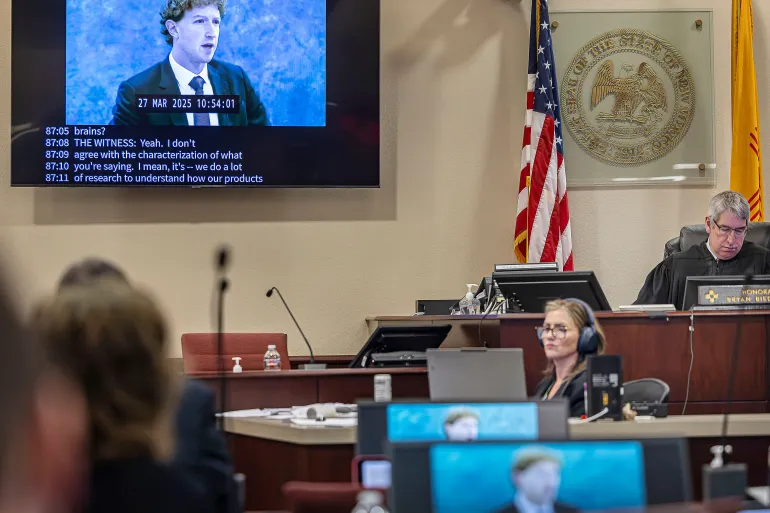

In New Mexico, jurors found Meta misled users about how safe Facebook and Instagram really are for kids, especially when it comes to online predators. A day later in Los Angeles, another jury went further, ruling that Meta and Google’s YouTube played a meaningful role in causing mental health harm to a teenage user. The case ended with a $6 million payout, with Meta on the hook for most of it.

For Timothy Edgar, a lecturer at Harvard Law School, the message is clear: this is “a major watershed event.” In his view, it signals a real shift in how Americans see Big Tech – less benefit of the doubt, more willingness to hold companies accountable.

The financial penalties themselves won’t dent a company of Meta’s size. Even the larger $375 million judgment from New Mexico is small change for a business pulling in tens of billions in profit each year. But the legal precedent? That’s where things get uncomfortable.

More lawsuits are already lined up. Some are expected to test whether social media platforms can be held responsible not just for content, but for how their products are designed – the endless scrolling, the algorithmic nudges, the features critics say are built to hook younger users early.

That idea is gaining traction. One legal expert called this moment the tech industry’s “Big Tobacco” phase – a comparison that’s hard to ignore, given how those cases reshaped an entire sector.

Meta, for its part, isn’t backing down. The company says it will appeal both rulings and argues that no single app can be blamed for complex issues like teen mental health. Google has taken a similar stance, insisting YouTube doesn’t even fit the traditional definition of a social network.

Still, the pressure is building. Lawmakers are circling, and Section 230 – the legal shield that protects platforms from liability over user content – is once again in the spotlight. Some in Washington now see these verdicts as fuel for rewriting or even scrapping the law altogether.

That could change the internet as we know it.

Even supporters of tougher regulation admit there’s a trade-off. Rein in platforms too aggressively, and you risk creating a more cautious, tightly controlled online world. Leave things as they are, and the concerns around harm – especially for younger users – keep piling up.

Meanwhile, investors have their own worries.

Meta’s stock has slipped more than 2% over the past year, lagging most of its megacap peers. Only Microsoft has done worse. The drag isn’t coming from the lawsuits but from Meta’s expensive and still uncertain push into artificial intelligence. The company is planning to spend as much as $135 billion on infrastructure this year, even as rivals like Google and OpenAI pull ahead.

Layoffs haven’t helped the mood. Hundreds of jobs were cut this week, including roles in Reality Labs, the division handling virtual reality and AI-powered devices. That follows earlier cuts that already trimmed about 10% of the unit.

Put it all together and the picture gets messy. Legal challenges on one side, investor skepticism on the other, and a fast-moving AI race in the middle.

What’s happening in the courts may end up mattering most. These cases are starting to redefine responsibility in the social media era – not just what platforms host, but how they’re built and who they affect.

And if this week is any indication, the rules are beginning to change.
